I've always been curious how bhyve performance compares to other hypervisors, so I decided to compare it with VirtualBox. The target audiences of these projects differ somewhat, though. VirtualBox is aimed more at desktop users: it's easy to use, has a nice GUI, and ships guest additions that make running a GUI inside a guest smooth. bhyve appears to target more experienced users and operators, as it makes it easier to create flexible configurations and to automate things. Anyway, it's still interesting to find out which one is faster.

Setup Overview

I used my development box for this benchmarking. It has no additional load, so the results should be reasonably clean in that respect. It's running 12-CURRENT:

FreeBSD 12.0-CURRENT amd64

as of October 4th.

It has an Intel i5 CPU, 16 GB of RAM, and an old 7200 RPM IDE HDD:

CPU: Intel(R) Core(TM) i5-4690 CPU @ 3.50GHz (3491.99-MHz K8-class CPU)

Origin="GenuineIntel" Id=0x306c3 Family=0x6 Model=0x3c Stepping=3

Features=0xbfebfbff<FPU,VME,DE,PSE,TSC,MSR,PAE,MCE,CX8,APIC,SEP,MTRR,PGE,MCA,CMOV,PAT,PSE36,CLFLUSH,DTS,ACPI,MMX,FXSR,SSE,SSE2,SS,HTT,TM,PBE>

Features2=0x7ffafbff<SSE3,PCLMULQDQ,DTES64,MON,DS_CPL,VMX,SMX,EST,TM2,SSSE3,SDBG,FMA,CX16,xTPR,PDCM,PCID,SSE4.1,SSE4.2,x2APIC,MOVBE,POPCNT,TSCDLT,AESNI,XSAVE,OSXSAVE,AVX,F16C,RDRAND>

AMD Features=0x2c100800<SYSCALL,NX,Page1GB,RDTSCP,LM>

AMD Features2=0x21<LAHF,ABM>

Structured Extended Features=0x2fbb<FSGSBASE,TSCADJ,BMI1,HLE,AVX2,SMEP,BMI2,ERMS,INVPCID,RTM,NFPUSG>

XSAVE Features=0x1<XSAVEOPT>

VT-x: PAT,HLT,MTF,PAUSE,EPT,UG,VPID

TSC: P-state invariant, performance statistics

real memory = 17179869184 (16384 MB)

avail memory = 16448757760 (15686 MB)

ada0: <ST3200822A 3.01> ATA-6 device

For the tests I used two VMs, one in bhyve and one in VirtualBox. The bhyve VM was started like this:

bhyve -c 2 -m 4G -w -H -S \

-s 0,hostbridge \

-s 4,ahci-hd,/home/novel/img/uefi_fbsd_20gigs.raw \

-s 5,virtio-net,tap1 \

-s 29,fbuf,tcp=0.0.0.0:5900,w=800,h=600,wait \

-s 30,xhci,tablet \

-s 31,lpc -l com1,stdio \

-l bootrom,/usr/local/share/uefi-firmware/BHYVE_UEFI.fd \

vm0

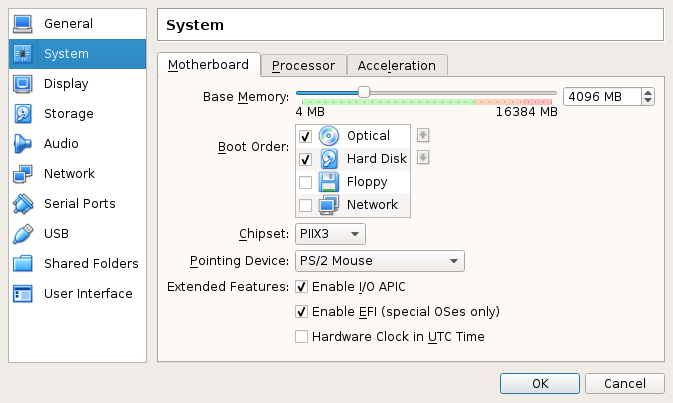

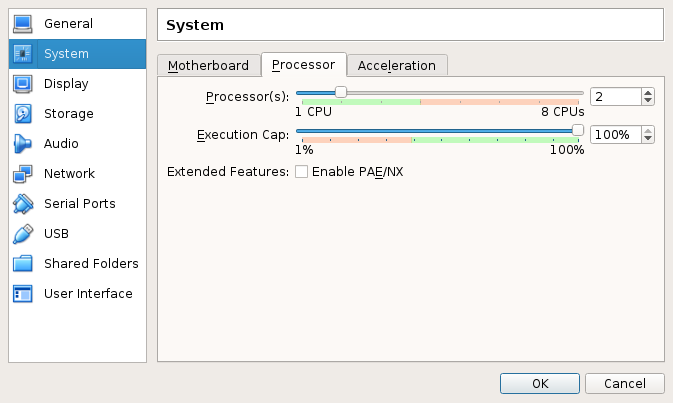

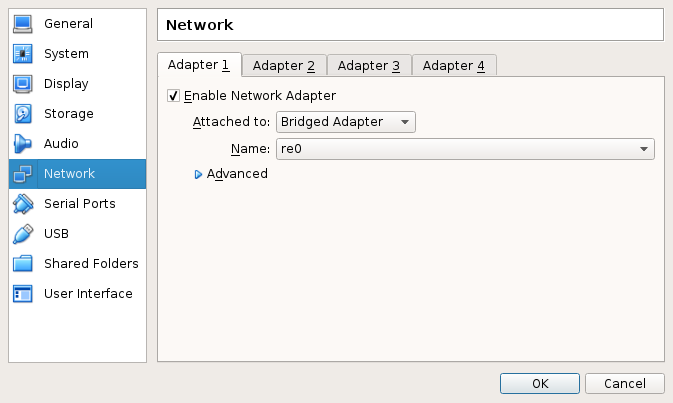

Main pieces of the VirtualBox VM configuration are displayed on these screenshots:

The guest is:

FreeBSD 11.0-RELEASE-p1 amd64

on UFS. VirtualBox is version 5.1.6 r110634, installed via pkg(8).

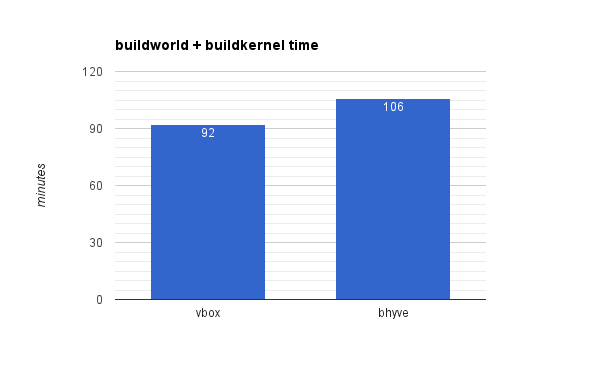

World Compilation

The first test is running:

make buildworld buildkernel -j4

on the releng/11.0 source tree.

The result is that bhyve is approx. 15% slower (106 minutes vs 92 minutes for VirtualBox):

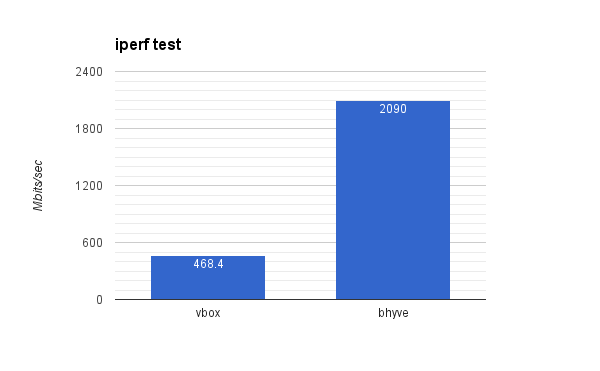
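For reference, the "approx. 15%" figure follows directly from the two wall-clock times quoted for the build. A minimal sketch of that arithmetic, where the helper name `pct_slower` is made up for illustration:

```sh
#!/bin/sh
# Relative slowdown of one run versus a baseline, in percent.
# Usage: pct_slower <slower_minutes> <baseline_minutes>
# (pct_slower is a hypothetical helper, not part of any tool used here.)
pct_slower() {
    awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", (a - b) / b * 100 }'
}

# bhyve (106 min) relative to VirtualBox (92 min)
pct_slower 106 92
```

With the numbers above this prints 15.2, i.e. bhyve took about 15% longer than VirtualBox.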

iperf test

The next test is a network performance check using the iperf(8) tool. To avoid interaction with hardware NICs and switches (with unpredictable load), all test traffic stays within the host system:

vm# iperf -s

host# iperf -c $vmip

As a reminder, both bhyve and VirtualBox VMs are configured to use bridged networking. Also, both are using virtio-net NICs.

The result is a little strange, because bhyve appears to be more than 4 times faster here:

That seemed strange to me, so I ran the test multiple times for both VirtualBox and bhyve; however, the results were pretty close every time. I have yet to find out whether that's really a correct result or whether something is wrong with my testing or setup.

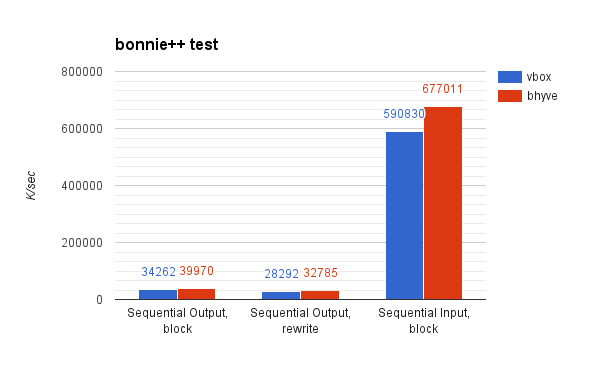
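Repeating the iperf run and averaging the results can be scripted. The sketch below is not the script used for these measurements. It assumes classic iperf 2 client output, where `-f m` makes the summary line end in `<value> Mbits/sec`, and the helper names (`mean`, `parse_mbits`, `iperf_runs`) are made up for illustration:

```sh
#!/bin/sh
# Average the numbers given one per line on stdin.
mean() {
    awk '{ sum += $1; n++ } END { if (n > 0) printf "%.1f\n", sum / n }'
}

# Extract the bandwidth value from iperf client output read on stdin.
# Assumption: the last matching line looks like
#   "[  3]  0.0-10.0 sec  1115 MBytes   935 Mbits/sec"
parse_mbits() {
    awk '/Mbits\/sec/ { v = $(NF-1) } END { print v }'
}

# Run the iperf client several times against a server and print one
# bandwidth figure (Mbits/sec) per run.
# Usage: iperf_runs <server_ip> [runs]
iperf_runs() {
    vmip=$1
    runs=${2:-5}
    i=0
    while [ "$i" -lt "$runs" ]; do
        iperf -c "$vmip" -f m | parse_mbits
        i=$((i + 1))
    done
}

# Example (with "iperf -s" already running in the VM):
#   iperf_runs "$vmip" 5 | mean
```

Averaging only smooths out run-to-run noise; it would not explain a consistent 4x gap between the two hypervisors.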

bonnie++ test

bonnie++ is a benchmarking tool for hard drives and filesystems. Let's jump to the results right away:

This makes bhyve write and read speeds approx. 15% higher than VirtualBox. One caveat, though: for the VirtualBox VM I used the VDI disk format (as it's native to VirtualBox) and SATA emulation, because there's no virtio-blk support in VirtualBox. For bhyve I also used SATA emulation (I actually intended to use virtio-blk here, but forgot to change the command line), but on a raw image instead.

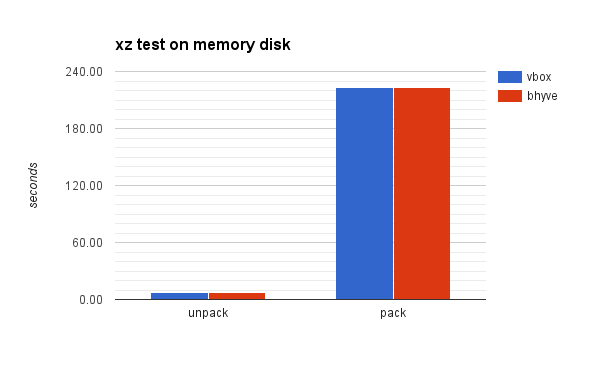
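For reference, the virtio-blk variant that was intended for the bhyve disk would differ from the invocation used here only in the device type on slot 4. The sketch below was not benchmarked in this post, and the function wrapper `start_vm0_virtio_blk` is a made-up name used only to keep the command from running when the snippet is sourced:

```sh
#!/bin/sh
# Same bhyve invocation as in the setup section, with slot 4 switched
# from ahci-hd to virtio-blk. Untested in this comparison.
start_vm0_virtio_blk() {
    bhyve -c 2 -m 4G -w -H -S \
        -s 0,hostbridge \
        -s 4,virtio-blk,/home/novel/img/uefi_fbsd_20gigs.raw \
        -s 5,virtio-net,tap1 \
        -s 29,fbuf,tcp=0.0.0.0:5900,w=800,h=600,wait \
        -s 30,xhci,tablet \
        -s 31,lpc -l com1,stdio \
        -l bootrom,/usr/local/share/uefi-firmware/BHYVE_UEFI.fd \
        vm0
}
```

Note that a FreeBSD guest sees a virtio-blk disk as vtbd0 rather than ada0, so /etc/fstab in the guest would probably need adjusting before booting this way.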

xz(1) on memory disk test

The last test is to unpack and pack an XZ archive on a memory disk. I used a memory disk specifically to exclude disk I/O from the equation. For a sample archive I chose:

ftp://ftp.freebsd.org/pub/FreeBSD/releases/ISO-IMAGES/11.0/FreeBSD-11.0-RELEASE-amd64-memstick.img.xz

And the commands to unpack and pack were:

unxz FreeBSD-11.0-RELEASE-amd64-memstick.img.xz

xz FreeBSD-11.0-RELEASE-amd64-memstick.img
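One possible way to set up such a run inside the guest is sketched below. The exact memory disk setup is not recorded in this post, so the mount point, the 2g size, the use of mdmfs(8), and the wrapper name `xz_memdisk_test` are all assumptions for illustration:

```sh
#!/bin/sh
# Hypothetical helper: create a memory disk, copy the archive onto it,
# then time unpacking and re-packing. Run as root in the guest.
xz_memdisk_test() {
    mkdir -p /mnt/ramdisk
    # Swap-backed memory disk with a UFS filesystem (mdmfs default);
    # 2g is an assumed size, adjust to fit the unpacked image.
    mdmfs -s 2g md /mnt/ramdisk || return 1
    cp FreeBSD-11.0-RELEASE-amd64-memstick.img.xz /mnt/ramdisk/ || return 1
    cd /mnt/ramdisk || return 1
    time unxz FreeBSD-11.0-RELEASE-amd64-memstick.img.xz
    time xz FreeBSD-11.0-RELEASE-amd64-memstick.img
}
```

The memory disk should fit comfortably in the guest's 4G of RAM; otherwise a swap-backed disk may end up touching real storage again.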

Results are almost the same here:

Summary

- CPU and RAM performance seems to be identical (though it's not clear why buildworld takes longer on bhyve)

- I/O performance is approx. 15% better with bhyve

- Networking performance is more than 4x better with bhyve (this looks suspicious and requires additional research; also, I'm not familiar with the bridged networking implementation in VirtualBox, which might explain the difference)

Further Reading

- Raw command output used during the testing

- bonnie report generated using bon_csv2html(1)

- https://www.freebsd.org/doc/handbook/virtualization-host-virtualbox.html

- https://www.freebsd.org/doc/handbook/disks-virtual.html

PS: Initially I was going to use phoronix-test-suite. However, it appears that a lot of important tests fail to run on FreeBSD. The ones that did run, such as the apache or sqlite tests, do not seem very representative to me. I'll probably try to run similar tests with a Linux guest.


Great article man!

The cause of your network performance issues in vbox was likely due to the type of virtualized NIC used. I can't recall off hand which is default, but there's a huge difference between, say, "E1000" or 3com or something else.

Yeah, I've tested other NIC drivers (e1k and virtio specifically) in the follow up post (http://empt1e.blogspot.ru/2016/11/bhyve-vs-virtualbox-benchmarking-part2.html).
